There’s no easy way to test the effect of an earthquake on a building or landscape. The amount of energy is so large and the structures are so complex that computational simulations are the only way to see what is really happening. Researchers from the University of New South Wales (UNSW) have developed much more efficient methods for parallel computing of massive energy propagation problems on supercomputers.

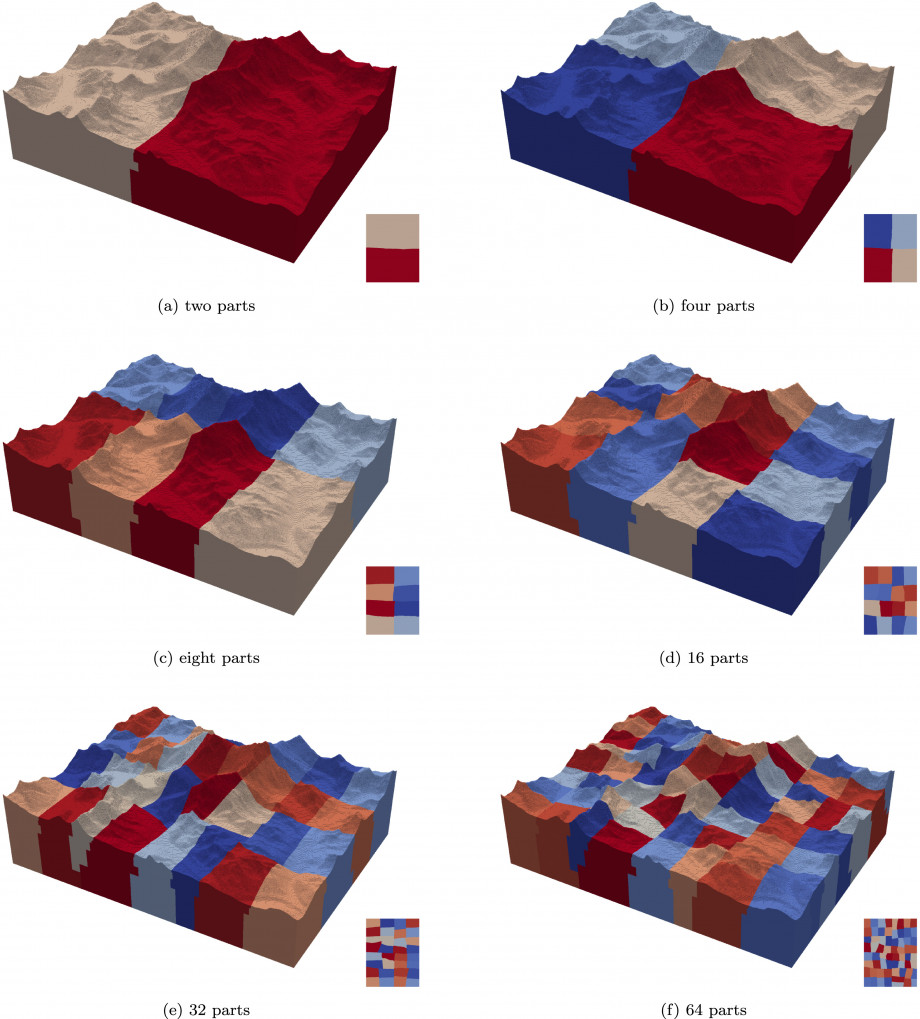

When simulating deformation and energy propagation, whether it is in the landing gear of a plane, the struts of a building, the bedrock during tunnel construction, or a composite panel with complex geometry, researchers produce a three-dimensional mesh that becomes the computational representation of that object. The mesh contains millions or billions of points, each with its own set of characteristics and each connected to many others. For large objects or ones that need particular detail, calculating the ideal mesh to use is incredibly complex. The UNSW team has devised a more efficient hierarchical meshing algorithm for generating these computational meshes, based on mapping out repeated patterns of the elements in the object they are representing. The team has also developed a powerful complementary solver suitable for parallel computing.

The research team also managed to achieve incredible scalability in their code. Researchers using NCI run specially designed software that splits up the computing task into many smaller chunks. All of the chunks run at the same time on hundreds or thousands of processors. In the idealised case, doubling the number of processors halves the calculation time. In practice, however, overheads in the communication between processors slightly reduce the parallel efficiency of any large calculation.
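The idea of parallel efficiency described above can be made concrete with a small calculation. The following is a minimal sketch only: the function names and the timings are hypothetical and are not measurements from the UNSW code. It compares the speedup actually achieved with the ideal speedup expected from adding processors.

```python
def speedup(t_base: float, t_new: float) -> float:
    """How many times faster the new run is than the baseline run."""
    return t_base / t_new


def parallel_efficiency(p_base: int, t_base: float, p_new: int, t_new: float) -> float:
    """Achieved speedup divided by the ideal speedup (p_new / p_base).

    1.0 means perfect scaling; values below 1.0 mean overheads cost some time.
    """
    ideal_speedup = p_new / p_base
    return speedup(t_base, t_new) / ideal_speedup


# Hypothetical timings in seconds, for illustration only.
# Idealised case: doubling the processors halves the run time.
ideal = parallel_efficiency(1000, 100.0, 2000, 50.0)

# More typical case: communication overheads make the run slightly slower.
with_overhead = parallel_efficiency(1000, 100.0, 2000, 55.0)

print(f"ideal efficiency:         {ideal:.0%}")
print(f"efficiency with overhead: {with_overhead:.0%}")
```

With these made-up numbers, the idealised run scores 100% and the run with overheads scores a little over 90%.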

In an unexpected and remarkable result, the computational method the UNSW team has developed actually achieves better than 100% efficiency. They exploited the repeated element patterns in the mesh, reducing the overall memory requirement significantly (down to around 300 gigabytes for a mesh with 1 billion unknowns). By efficiently dividing the dataset of the mesh across up to 16,000 processors, the team made sure that each processor had only a tiny amount of data to handle. A large portion of that data fitted into the cache – the fastest memory system a processor has access to – boosting the efficiency of the solver. These efficiency gains mean that a calculation with double the number of processors takes less than half the time.
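The figures quoted above allow a rough back-of-the-envelope check of how little data each processor holds. This is a sketch under stated assumptions: it takes 1 gigabyte as 10^9 bytes, assumes the mesh is split evenly, and uses a hypothetical 16 MB cache size that is not taken from the Gadi hardware specification.

```python
# Figures quoted in the article.
TOTAL_MEMORY_BYTES = 300e9  # "around 300 gigabytes" (assuming 1 GB = 10**9 bytes)
UNKNOWNS = 1e9              # mesh with 1 billion unknowns
MAX_PROCESSORS = 16_000

# Assumption for illustration only, not a Gadi specification.
HYPOTHETICAL_CACHE_MB = 16.0


def data_per_processor_mb(processors: int) -> float:
    """Memory each processor holds if the data is divided evenly."""
    return TOTAL_MEMORY_BYTES / processors / 1e6


def fraction_in_cache(processors: int, cache_mb: float = HYPOTHETICAL_CACHE_MB) -> float:
    """Rough share of a processor's data that could sit in its cache."""
    return min(1.0, cache_mb / data_per_processor_mb(processors))


bytes_per_unknown = TOTAL_MEMORY_BYTES / UNKNOWNS

for p in (1_000, 4_000, MAX_PROCESSORS):
    print(f"{p:>6} processors: {data_per_processor_mb(p):7.2f} MB each, "
          f"about {fraction_in_cache(p):.0%} could fit in cache")
```

Under these assumptions each unknown costs about 300 bytes, and at 16,000 processors each one holds under 19 MB. As the per-processor share shrinks, a growing fraction of it can stay in cache, which is the mechanism the article credits for run times falling by more than half when the processor count doubles.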

Professor Chongmin Song says, “We are excited to be doing this interesting work on the Gadi supercomputer. Gadi allows for simulations with billions of grid points, which is going to be meaningful for researchers in many fields. There is a big potential for future real-world applications in earthquakes, crash simulations, construction, advanced manufacturing, and much more.”

Taking advantage of supercomputers for the most impactful computational science requires constant innovations in the software and methods used. Improvements to computational methods build up over time, as research teams around the world develop better and more efficient algorithms. Benefits flow through to all researchers in that area. The University of New South Wales research team is actively developing the scaled boundary finite element method and finding better ways of conducting important simulations at huge scales and incredible resolutions.

Read the research paper on the ScienceDirect website.